Private Inference for Legal AI: When Trust Isn't Enough

Private inference is starting to matter in a way it didn’t six months ago.

This isn't because the technology suddenly appeared out of nowhere, but because the gap between what firms want to do with AI and what they are comfortable doing with client data is becoming harder to ignore as use cases move closer to the core of legal work.

Most current setups still rely on trust. Prompts are sent to a provider, contractual controls sit in the background, and the assumption is that data is handled appropriately. That's reasonable for a large portion of work, but it becomes far more difficult to justify when the material is (properly) sensitive, the matter is live, or there is any realistic chance that the work could later surface in disclosure.

That tension has been growing for a while, and private inference is one of the first approaches that addresses it at a technical level rather than a policy one.

What private inference actually does

At a basic level, private inference runs models inside isolated environments where even the infrastructure provider cannot inspect the data being processed. This is typically achieved through hardware-backed enclaves, where data enters a sealed execution context, is processed, and leaves again without being exposed to the surrounding system.

That is a different category of control to what most firms are used to. Current AI deployments are largely governed by policy, access controls and vendor assurances. Private inference shifts that into enforced isolation, where accessing the data requires actively breaking the boundary rather than simply having permission to view it.

This is why it has gained traction in areas like incident response and security research. Legal work shares many of the same characteristics, particularly where confidentiality, liability and reputational exposure sit close together.

Why this matters for legal workflows

Legal teams are already applying AI across document review, clause analysis, due diligence and drafting. The limiting factor has not been capability; it has been the question of where the data goes and who might be able to access it, even in theory.

The more valuable use cases tend to be the ones that are constrained:

- Internal investigations before any disclosure position is established

- Regulatory exposure analysis across internal communications

- Pre-transaction material with market sensitivity

- Litigation strategy where discovery risk is real rather than hypothetical

These are not difficult problems from a modelling perspective. The hesitation comes from sending that material to a third party and relying on policy as the primary safeguard.

Private inference changes that dynamic. It allows firms to move away from a binary decision about whether to use AI on a matter at all, and instead consider how strongly isolation should be enforced for different types of work.

Frontier models vs open models

There is a tendency to frame this as a simple trade-off between capability and control, but the reality is more nuanced.

Frontier models continue to lead on raw capability. They improve faster, handle edge cases more effectively and require less effort to deploy. That advantage is real.

However, the gap is often applied too broadly. Many legal workflows are structured, repetitive and bounded by defined rules. They do not require the full reasoning depth of the latest model to deliver consistent results.

In practice, the pattern tends to be:

- Frontier models demonstrate what is possible

- Open models reach a comparable level within a relatively short period

- Most production use cases sit comfortably (well) below that upper bound

The problem is not that open models are incapable; it is that firms do not define their requirements precisely enough to understand what level of capability is actually needed.

Smaller models are often a better fit

Smaller models are frequently treated as a compromise, when in many cases they are better aligned to how systems are actually used.

They offer faster inference, lower cost and greater flexibility when embedded within workflows. More importantly, they are easier to constrain and reason about, which becomes critical once the focus shifts from individual outputs to system behaviour.

A larger model may produce a stronger answer in isolation. A smaller model, operating at speed and scale, can support repeated checks, structured processing, and consistency across a workflow. That often leads to more reliable outcomes overall.
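As a rough illustration of what "repeated checks" can look like, the sketch below calls a model several times on the same question and only accepts the answer when the runs agree often enough. Everything here is an assumption made for illustration: `majority_answer`, `scripted_model`, the run count and the agreement level are hypothetical, and the scripted stub stands in for a real model call.

```python
from collections import Counter
from itertools import cycle
from typing import Callable, Optional, Sequence


def majority_answer(
    model: Callable[[str], str],
    prompt: str,
    runs: int = 5,
    min_agreement: float = 0.8,
) -> Optional[str]:
    """Ask the same question `runs` times.

    Returns the most common answer if it reaches `min_agreement`,
    otherwise None so the item can be escalated for human review.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    answers = [model(prompt).strip().lower() for _ in range(runs)]
    answer, count = Counter(answers).most_common(1)[0]
    return answer if count / runs >= min_agreement else None


def scripted_model(responses: Sequence[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for a model: replays fixed responses in a loop."""
    replay = cycle(responses)
    return lambda prompt: next(replay)


question = "Does this clause contain a change-of-control trigger? Answer yes or no."

# A model that always agrees with itself is accepted.
print(majority_answer(scripted_model(["yes"]), question))

# A model that alternates between answers falls below the agreement level.
print(majority_answer(scripted_model(["yes", "no"]), question))
```

The point is less the voting itself than the economics: five calls per question is only practical when each call is fast and cheap, which is where a smaller model tends to earn its place.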

The part most teams are still missing: evaluation

This is where the discussion becomes less comfortable, particularly for teams used to relying on vendor capability rather than internal validation.

Moving from a managed API to a model you control requires more than a deployment decision. It requires evidence that the system performs as expected. You should be evaluating anyway, but now you are the one making specific choices about the model.

That includes:

- Clearly defined tasks with measurable success criteria

- Representative datasets derived from real work

- Repeatable evaluation processes

- Thresholds that are enforced rather than assumed

Without this, any comparison between models is largely anecdotal. Many legal AI initiatives still operate on perceived quality rather than measured performance, which is sustainable during experimentation but not once outputs become material to a matter.

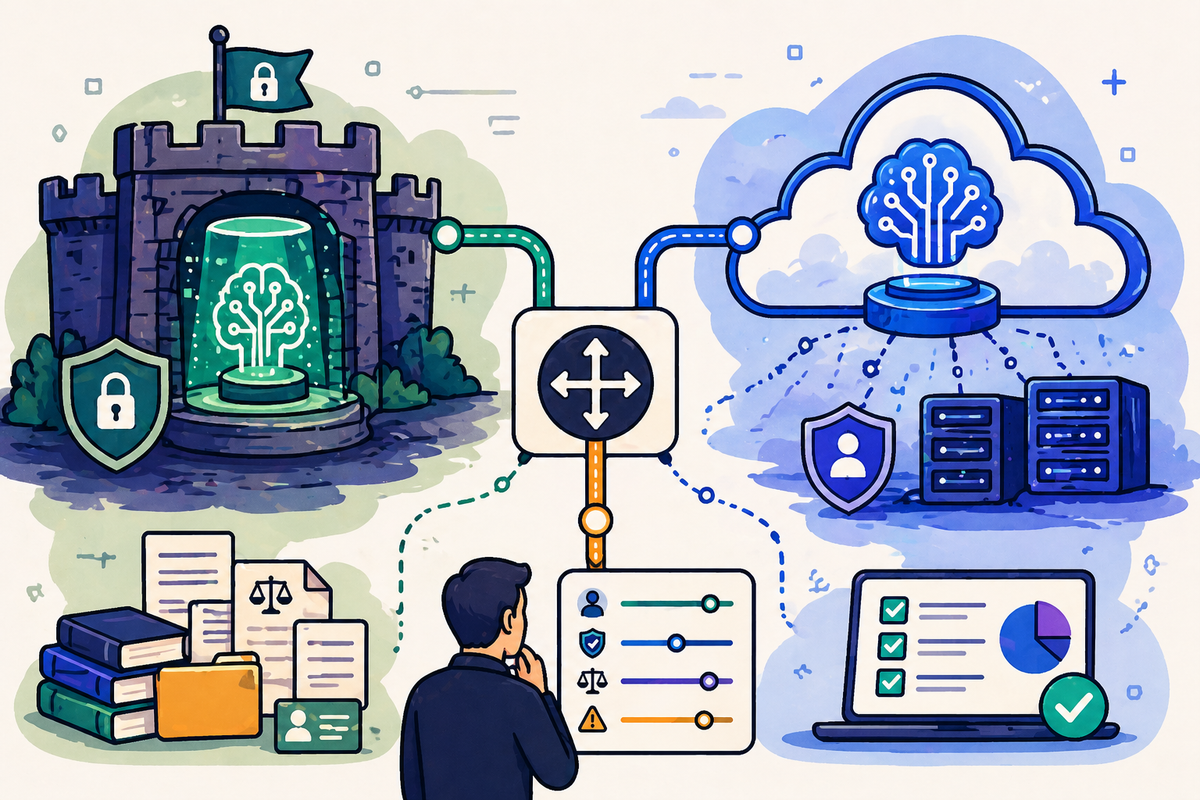
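To make the four requirements above a little more concrete, here is a minimal sketch of an evaluation gate: a defined task, a small labelled dataset, a repeatable scoring function and a threshold that is actually checked. The names (`Example`, `accuracy`, `passes_gate`, `toy_clause_classifier`), the sample clauses and the threshold values are all illustrative assumptions, and the keyword classifier is only a stand-in for a real model.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Example:
    """One labelled item from a representative dataset (hypothetical shape)."""
    text: str
    expected: str


def accuracy(model: Callable[[str], str], dataset: Sequence[Example]) -> float:
    """Fraction of examples where the model output matches the expected label."""
    if not dataset:
        raise ValueError("dataset must not be empty")
    correct = sum(
        1 for ex in dataset
        if model(ex.text).strip().lower() == ex.expected.lower()
    )
    return correct / len(dataset)


def passes_gate(
    model: Callable[[str], str],
    dataset: Sequence[Example],
    threshold: float,
) -> bool:
    """Enforce the threshold instead of assuming the model is good enough."""
    return accuracy(model, dataset) >= threshold


def toy_clause_classifier(text: str) -> str:
    """Stand-in for a real model: a naive keyword check for indemnity clauses."""
    return "indemnity" if "indemnif" in text.lower() else "other"


DATASET = [
    Example("The Supplier shall indemnify the Customer against all losses.", "indemnity"),
    Example("This Agreement is governed by the laws of England and Wales.", "other"),
    Example("Each party shall hold the other harmless from third-party claims.", "indemnity"),
    Example("Notices must be sent in writing to the registered office.", "other"),
]

score = accuracy(toy_clause_classifier, DATASET)
print(f"accuracy={score:.2f}")
print("release allowed:", passes_gate(toy_clause_classifier, DATASET, threshold=0.9))
```

The toy classifier misses the "hold harmless" clause, so it fails a 0.9 threshold. That is the kind of result a perceived-quality review would likely miss, and a real dataset would need to be far larger and drawn from actual work.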

Where private inference fits

Private inference is best understood as part of a broader routing strategy rather than a standalone capability.

The system has access to context about the client, the matter, and the nature of the task being performed, and can use that context to determine where the work should run. Some tasks can be handled by standard cloud models without introducing meaningful risk. Others require stronger isolation, while some benefit from being handled by smaller models embedded within tightly controlled workflows.

The decision is not based solely on the content of a document. It reflects a combination of factors:

- The sensitivity profile of the client

- The stage and nature of the matter

- The type of processing being performed

- The potential downstream exposure, including disclosure and regulatory risk

For firms operating across multiple jurisdictions or handling particularly sensitive work, that broader context is often where the real risk sits. Private inference becomes one of several execution paths within a system that governs how AI is applied, rather than a separate environment reserved for exceptional cases.
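One way to picture this is as a small routing function that takes matter context, not just document content, and returns an execution path. The sketch below is an assumption-heavy illustration: the `TaskContext` fields, the `Route` names, the task labels and the rules themselves are hypothetical, and a real policy would be set by the firm's risk function rather than hard-coded.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    STANDARD_CLOUD = "standard_cloud"
    PRIVATE_INFERENCE = "private_inference"
    SMALL_MODEL_WORKFLOW = "small_model_workflow"


@dataclass(frozen=True)
class TaskContext:
    """Illustrative context; the field names and values are assumptions."""
    client_sensitivity: str  # "standard" or "high"
    matter_live: bool        # stage of the matter
    task_type: str           # e.g. "drafting", "clause_extraction", "investigation"
    disclosure_risk: bool    # realistic downstream disclosure or regulatory exposure


# Bounded, repetitive tasks that suit a smaller model in a controlled workflow.
STRUCTURED_TASKS = {"clause_extraction", "document_classification"}


def choose_route(ctx: TaskContext) -> Route:
    """Pick an execution path from matter context (example rules only)."""
    needs_isolation = (
        ctx.client_sensitivity == "high"
        or ctx.disclosure_risk
        or (ctx.matter_live and ctx.task_type == "investigation")
    )
    if needs_isolation:
        return Route.PRIVATE_INFERENCE
    if ctx.task_type in STRUCTURED_TASKS:
        return Route.SMALL_MODEL_WORKFLOW
    return Route.STANDARD_CLOUD


routine = TaskContext("standard", matter_live=False, task_type="drafting", disclosure_risk=False)
sensitive = TaskContext("standard", matter_live=True, task_type="investigation", disclosure_risk=False)

print(choose_route(routine).value)
print(choose_route(sensitive).value)
```

Keeping the rules in one explicit function means the routing decision can be reviewed, tested and audited like any other policy, rather than living in individual judgement calls.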

The trade-offs do exist

Private inference introduces additional complexity. Access to the latest frontier capabilities may be delayed, infrastructure decisions become more involved, and responsibility for monitoring and evaluation shifts back to the firm.

It also requires a more disciplined approach to defining workflows and expected outcomes. General-purpose capability is no longer sufficient, since systems need to be designed with clear boundaries and measurable behaviour.

Those constraints are not incidental; they are what make the approach defensible.

Relying solely on contractual assurances while sending sensitive data to external providers is unlikely to remain a stable position, particularly as AI becomes more embedded in high-value legal work.

Private inference offers a different approach, one that aligns more closely with how legal services manage risk and confidentiality.

The more significant change is internal. Firms will need to decide:

- Which types of work justify stronger isolation

- Where frontier capability is genuinely required

- What constitutes acceptable performance in measurable terms

- Who is responsible for validating and maintaining that standard

The firms that adapt effectively will not necessarily be those with access to the most advanced models, but rather those that can make deliberate decisions about how models are used and demonstrate that those decisions are grounded in evidence.

Private inference does not remove the need for judgement; it requires it to be explicit.