Open source isn’t a playground: a practical guide for legal AI builders

The last few weeks have produced a visible surge in open source legal AI projects, with repos appearing, forks multiplying, and tools being shared across communities that weren’t particularly active even six months ago.

That’s useful momentum, and it’s long overdue.

It’s also the point where habits start to take hold, and right now those habits lean in a particular direction: fork first, ship fast, maintain later. The cost of that pattern isn’t obvious at the start, but it does show up, and when it does it tends to arrive in ways that are harder to unwind.

This piece is about that cost, and what to do instead.

Open source in legal isn’t starting from the same place

The norms around open source contribution didn’t emerge from principle; they emerged from necessity, slowly, over time.

People working in teams at Google and Meta grew up alongside open source infrastructure and, in doing so, helped shape how it works in practice. In that environment, shared ownership, structured review and long-term maintenance are not ideals; they are operational requirements.

There’s a practical reason for that.

Their systems depend on shared components, and once something sits deep enough in your stack, maintaining your own version stops being clever and starts becoming expensive in ways that compound over time. The Linux kernel is the clearest example. If you fork it and keep your changes private, you are committing to:

- reconciling every upstream update

- reapplying fixes manually

- carrying responsibility for security patches

Contributing back is not about goodwill in that context; it’s simply the only sustainable option.

That model does not translate cleanly into legal tech.

Law firms are not naturally open source contributing environments. In many cases, what gets built, whether that’s a workflow, a clause extraction approach, or a particular way of structuring data, is seen as part of the firm’s differentiation. Sharing that work upstream, even if it would benefit the wider ecosystem, can feel like giving away something that clients are paying for.

That’s a very different dynamic to contributing something like file format support to a project like Linux, where the incentive is clear: if your format is supported upstream, it works everywhere without you carrying the burden.

So the behaviour we’re seeing (forking, keeping changes local, building slightly different versions of the same thing) isn’t just a technical instinct. It’s shaped by the environment legal tech sits in, where control, differentiation and speed tend to matter more than shared infrastructure.

Most people building in this space are also working in smaller teams, moving quickly, and aiming towards a demo or proof point rather than infrastructure that needs to hold up over time. In that environment, forking feels like the most direct path forward.

You get control, avoid internal friction and move without waiting. The cost still arrives; it just tends to arrive later, often in a form that’s harder to deal with.

The instinct to fork

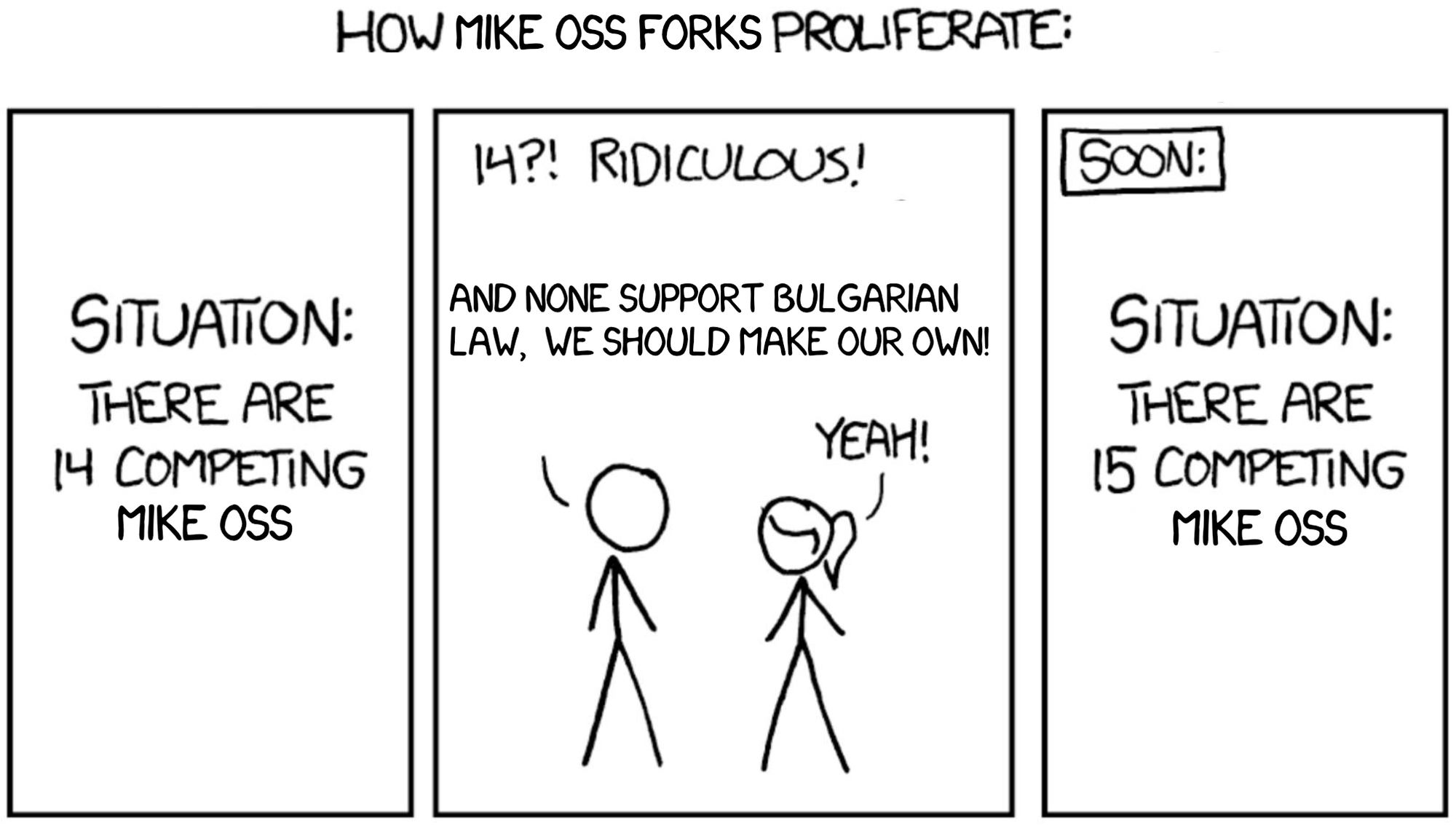

There’s a well-known xkcd comic about standards: 14 competing standards exist, so someone creates one more to unify them. Now there are 15.

A similar pattern is starting to show up in open source legal tech projects.

A useful repo gets forked, a feature gets added, and a new project appears. A few weeks later there are several versions of roughly the same idea, each slightly different, none clearly maintained, and no obvious path for improvements to flow across them.

It looks like progress, but in practice it spreads effort rather than concentrating it. Most changes don’t require a fork; they require a pull request.

If you are improving an existing project, the default position should be to contribute back:

- fixing a bug

- handling an edge case

- adding support for a new input type

- improving performance

Forking in these cases splits effort and makes it harder for fixes, particularly security fixes, to reach everyone who depends on the tool.

A fork starts to make sense when you are changing direction rather than adding capability. That usually means:

- a different product goal

- a fundamentally different architecture

- licensing constraints

- a deliberate decision to diverge long term

That’s a higher bar than most forks meet.

At the weekend, several Mike OSS forks moved away from Supabase to a local PostgreSQL setup with custom authentication. That might be the right decision in some cases, but it’s the kind of change that deserves a pause for thought.

If the goal is local deployment or control over data, existing paths already cover that. Supabase can be run locally with Docker and self-hosted in production, which means you retain the surrounding ecosystem, the authentication model, and the benefit of upstream updates.

Moving to a fully custom data and authentication layer brings in a different class of responsibility:

- session management

- access control

- data integrity and migrations

- ongoing security patching

That is not a small step, and it is rarely something that should be driven by what feels quickest in the moment.
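To make “session management” concrete, here is a deliberately minimal Python sketch of issuing and verifying a signed, expiring session token using only the standard library. The names (`issue_session`, `verify_session`, `SESSION_TTL_SECONDS`) and the token design are hypothetical, chosen for illustration; this is not how Mike OSS or Supabase handle sessions, and it is not production-ready.

```python
import base64
import hashlib
import hmac
import json
import secrets
import time
from typing import Optional

# Hypothetical signing key. A real system must store and rotate this securely;
# generating it per process means every restart silently logs everyone out.
SECRET_KEY = secrets.token_bytes(32)
SESSION_TTL_SECONDS = 3600  # assumed one-hour session lifetime


def _sign(body: bytes) -> bytes:
    """HMAC-SHA256 over the token body, URL-safe base64 encoded."""
    mac = hmac.new(SECRET_KEY, body, hashlib.sha256).digest()
    return base64.urlsafe_b64encode(mac)


def issue_session(user_id: str, now: Optional[float] = None) -> str:
    """Create a signed token carrying the user id and an expiry timestamp."""
    now = time.time() if now is None else now
    body = json.dumps({"sub": user_id, "exp": now + SESSION_TTL_SECONDS}).encode()
    return base64.urlsafe_b64encode(body).decode() + "." + _sign(body).decode()


def verify_session(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the user id if the token is authentic and unexpired, else None."""
    now = time.time() if now is None else now
    try:
        encoded_body, signature = token.split(".")
        body = base64.urlsafe_b64decode(encoded_body.encode())
    except ValueError:  # wrong shape, or invalid base64 (binascii.Error)
        return None
    # Constant-time comparison so the check does not leak timing information.
    if not hmac.compare_digest(signature.encode(), _sign(body)):
        return None
    claims = json.loads(body)
    if claims["exp"] <= now:
        return None
    return claims["sub"]
```

Even this much leaves out logout and revocation, key rotation, secure cookie handling, CSRF protection and per-document access checks. A custom authentication layer has to own and patch every one of those, which is exactly the responsibility that self-hosting an existing stack avoids.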

The fork-versus-contribute question is also a product question

If you are building to compete with tools like Harvey or Legora, then forking in order to create something genuinely distinct can be the right decision, even when it is more expensive to maintain. A different deployment model, data architecture or compliance posture can be meaningful product choices.

The more useful question to ask before forking is not just whether you can maintain the result, but whether the fork represents:

- a product decision

- or an impatience decision

One is defensible. The other is where maintenance debt accumulates, and it tends to surface later, usually at the point the system starts to matter.

Security isn’t something you add later

“It’s just an open source prototype” stops holding weight once other people start using the tool.

If it:

- processes documents

- connects to external services

- runs inside a firm environment

then it carries real risk from the moment it is used, simple as.

You don’t need a full security function, obviously, but you do need a baseline.

A SECURITY.md file should cover:

- how to report a vulnerability

- whether that should be done privately

- what kind of response someone can expect

Beyond that, the basics matter:

- enable dependency scanning

- merge security updates promptly

- version your releases clearly

If known vulnerabilities sit unaddressed, people will assume the project is not safe, and they’re usually right.
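One way to keep that baseline from drifting is to check it automatically. The sketch below is a hypothetical Python helper (`baseline_gaps` is an illustrative name, not an existing tool) that assumes a GitHub-hosted repository: it looks for a `SECURITY.md` in one of the locations GitHub recognises, a Dependabot configuration file, and release tags that follow semantic versioning. It checks files and tag names only; it cannot tell whether the policy is any good or whether security updates are actually being merged promptly.

```python
import re
import tempfile
from pathlib import Path
from typing import Sequence

# Locations GitHub recognises for a security policy file.
SECURITY_POLICY_PATHS = ("SECURITY.md", ".github/SECURITY.md", "docs/SECURITY.md")
# Assumes GitHub + Dependabot. Alerts and security updates can also be enabled
# in repository settings without this file, which a file check cannot see.
DEPENDABOT_CONFIG = ".github/dependabot.yml"
# Loose semantic-versioning pattern for release tags, e.g. v1.4.0 or 2.0.0-rc.1
SEMVER_TAG = re.compile(
    r"^v?(0|[1-9]\d*)\.(0|[1-9]\d*)\.(0|[1-9]\d*)"
    r"(?:-[0-9A-Za-z.-]+)?(?:\+[0-9A-Za-z.-]+)?$"
)


def baseline_gaps(repo: Path, release_tags: Sequence[str]) -> list:
    """Return human-readable gaps against a minimal security baseline."""
    gaps = []
    if not any((repo / p).is_file() for p in SECURITY_POLICY_PATHS):
        gaps.append("missing SECURITY.md (how to report, privately, expected response)")
    if not (repo / DEPENDABOT_CONFIG).is_file():
        gaps.append(f"no {DEPENDABOT_CONFIG}: confirm dependency scanning is enabled")
    if not release_tags:
        gaps.append("no release tags")
    for tag in release_tags:
        if not SEMVER_TAG.match(tag):
            gaps.append(f"release tag {tag!r} is not semantic-versioned")
    return gaps


if __name__ == "__main__":
    # Demo against a throwaway directory standing in for a repository checkout.
    with tempfile.TemporaryDirectory() as tmp:
        repo = Path(tmp)
        (repo / "SECURITY.md").write_text("# Reporting a vulnerability\n")
        for gap in baseline_gaps(repo, ["v0.1.0", "latest"]):
            print("-", gap)
```

In a real repository the tag list could come from `git tag --list`, and the check could run in CI so gaps show up as a failing build rather than as something a user discovers.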

Ownership matters as well. Someone needs to own:

- issue triage

- pull request review

- release decisions

Being open source does not remove your responsibility.

The energy behind legal AI right now is real, and for the most part it’s useful and infectious!

The question is whether it builds into something durable or spreads into a long tail of tools that look promising but don’t hold up when they are relied on.

That outcome is less about individual technical decisions and more about the defaults people reach for.

At the moment, those defaults lean towards:

- duplication over extension

- shipping without a clear maintenance plan

- treating repositories as experiments rather than systems

Changing that does not require slowing down.

It requires:

- contributing upstream where a change does not justify a fork

- treating security as part of the system from the start

- being clear whether a fork is a product decision or simply the easiest path

The tools that will matter in this space in two years will not be the ones that moved fastest over the next six months; they will be the ones someone chose to maintain.