Legal AI Needs Degradation Curves, Not Just Benchmarks

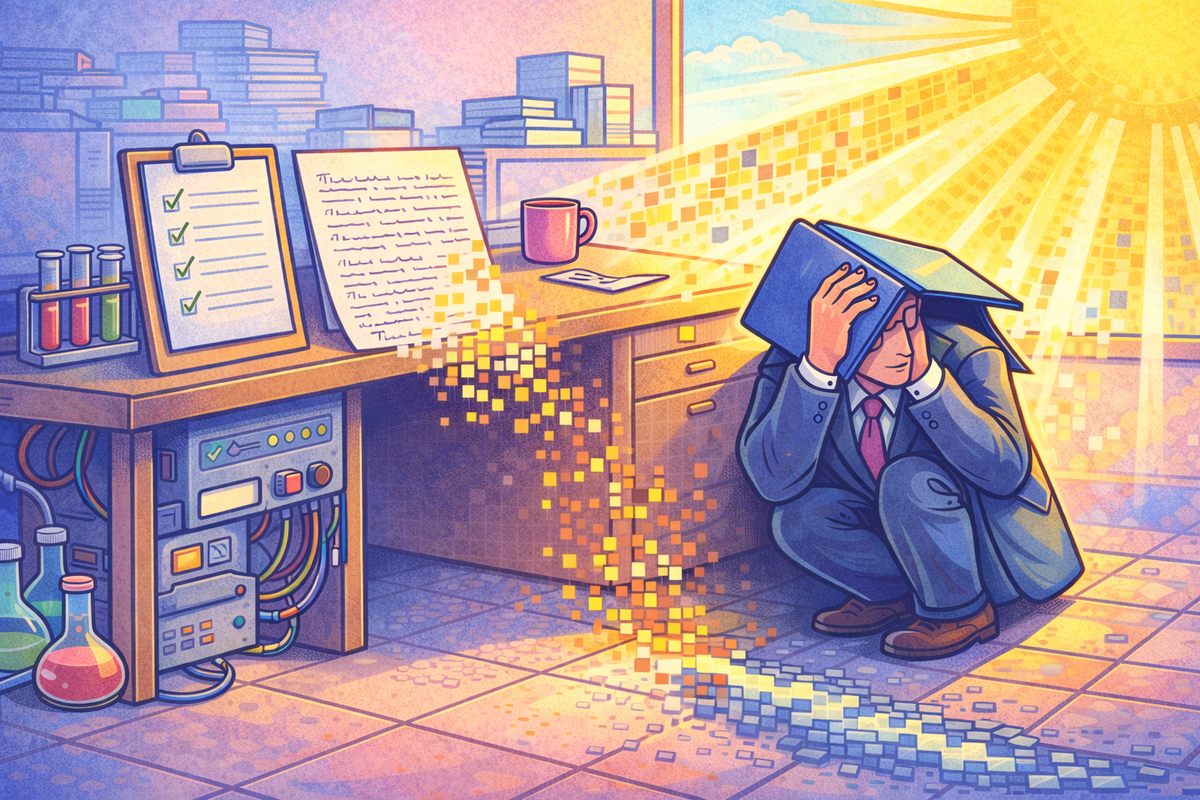

A couple of weeks ago I was off work with pneumonia. After hospital tests I was sent home with doxycycline, all standard enough. When I opened the box, one instruction caught my eye: avoid exposure to sunlight.

In the UK in late winter (or most of the year) that hasn’t been difficult. Grey skies have done the job. Still, the warning is there because at some point, enough patients reacted under the wrong conditions for someone to realise the pattern mattered.

The drug is not experimental. It has been prescribed for decades, and it is well studied and widely trusted. Yet one of its side effects only becomes relevant under specific environmental conditions. Combine the drug with strong UV exposure and some patients develop a significant phototoxic reaction. Remove the sunlight and the risk largely disappears.

That warning did not come from abstract modelling. It came from observation, reporting, controlled reproduction and eventually mechanism analysis. Over time the safe operating envelope was defined.

The drug was not banned. Instead, the conditions of risk were mapped.

Legal AI needs the same treatment.

Benchmarks Are Comforting. They Are Not Control.

Most legal AI conversations still revolve around performance claims. Accuracy scores. Benchmark rankings. Claims that a model performs at junior or mid level.

Benchmarks are useful. They show how a system behaves under defined test conditions. They do not show how it behaves when the environment changes.

That distinction matters in professional services.

A clause extraction model can score highly on a curated dataset of clean agreements. That tells you it works in a lab. It does not tell you what happens when:

- Definitions are buried in annexes

- Amendments have accumulated over years

- Drafting quality is inconsistent

- US and UK terminology are mixed

- Retrieval fails to surface a key clause

- Context limits are pushed to their edge

Those are not exotic scenarios. They are normal, day-to-day conditions on live matters.

If performance degrades under those inputs, the question is not whether the tool is flawed (though there is always room for improvement). It is whether you understand how and when that degradation happens.

Without that, you are operating on hopes and dreams, much like my lungs were.

What a Degradation Curve Actually Means

In medicine, risk is not described as a single number. It is mapped against variables: dose, exposure, patient characteristics.

In legal AI, the equivalent is mapping performance against complexity.

- How does extraction accuracy change as document length increases?

- At what point does hallucination risk increase when definitions are missing?

- How sensitive is output to minor prompt variation?

- What happens to risk scoring when drafting becomes deliberately ambiguous?

- How does retrieval accuracy influence downstream reasoning stability?

If you cannot answer those questions empirically, you do not understand your system well enough to scale it responsibly.

A degradation curve turns a vague sense of risk into something operational. If accuracy drops materially beyond a defined complexity threshold, you can design controls around that threshold: require mandatory review, constrain deployment or adjust workflows.
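To make that concrete, here is a minimal sketch of how a curve could be built from graded test cases. It assumes page count as the complexity proxy and a simple correct/incorrect grade per case. The names `EvalResult`, `degradation_curve` and `review_threshold` are illustrative, not from any particular tool, and the bucket edges and accuracy floor are placeholders you would set from your own data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class EvalResult:
    """One graded test case. Field names are illustrative assumptions."""
    page_count: int  # complexity proxy; amendment count would work the same way
    correct: bool    # did the output match the lawyer-reviewed answer?


def degradation_curve(results, bucket_edges):
    """Return [(bucket_label, accuracy, n)] ordered by increasing complexity."""
    totals = defaultdict(lambda: [0, 0])  # bucket index -> [correct, total]
    for r in results:
        # Count how many edges the page count exceeds to find its bucket
        idx = sum(r.page_count > edge for edge in bucket_edges)
        totals[idx][0] += int(r.correct)
        totals[idx][1] += 1
    labels = [f"<={e}" for e in bucket_edges] + [f">{bucket_edges[-1]}"]
    return [
        (labels[i], totals[i][0] / totals[i][1], totals[i][1])
        for i in sorted(totals)
    ]


def review_threshold(curve, min_accuracy):
    """First bucket whose accuracy falls below the floor, or None."""
    for label, accuracy, _ in curve:
        if accuracy < min_accuracy:
            return label
    return None
```

Calling `review_threshold(degradation_curve(results, [50, 100]), 0.9)` returns the first length bucket where accuracy dips below 90%, which is the point at which a control such as mandatory review would kick in. Small buckets give noisy accuracy figures, so the sample count is returned alongside each one.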

A static benchmark score cannot do that.

The Discipline Most Firms Are Missing

In practice, AI failures are often treated as isolated annoyances. A lawyer spots an omission, corrects it, tweaks the prompt and moves on. The moment passes.

What is usually missing is structured capture.

At minimum, that means logging:

- The exact prompt and configuration

- The model and version

- The input document and context

- The expected output

- The actual output

- The materiality of the deviation

Without systematic capture, you cannot identify patterns. Without patterns, you cannot characterise degradation. Without characterisation, governance becomes theatre.
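As a rough sketch of what structured capture could look like, the record below mirrors the six items in the list. Everything here is an assumption for illustration: the class name `IncidentRecord`, the field names, the three-level materiality scale, and the choice to store a SHA-256 fingerprint of the document rather than the document itself (which may suit confidential matter files, but is a design decision for each firm).

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class IncidentRecord:
    """One logged deviation. Field names are illustrative assumptions."""
    prompt: str
    config: dict             # e.g. temperature, retrieval settings
    model: str
    model_version: str
    document_sha256: str     # fingerprint of the input document
    context: str             # retrieved passages or other supplied context
    expected_output: str
    actual_output: str
    materiality: str         # assumed scale: "low", "medium" or "high"
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def fingerprint(document_text: str) -> str:
    """Stable identifier for a document without storing its contents."""
    return hashlib.sha256(document_text.encode("utf-8")).hexdigest()


def to_log_line(record: IncidentRecord) -> str:
    """Serialise a record as one JSON line, ready to append to a log file."""
    return json.dumps(asdict(record), sort_keys=True)
```

Appending one line per incident to a shared file or table is enough to start with. The point is that every record has the same shape, so incidents can be grouped by model version, document or materiality later.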

Pharmacovigilance (crazy word) works because adverse reactions are not shrugged off. They are recorded, aggregated and analysed. Over time, correlations emerge and the risk profile becomes much clearer.

Legal AI should be no different.

Reproducibility Before Confidence

Once incidents are logged, they need to be interrogated.

Re-run the same prompt and document across model versions. Observe whether the issue persists. Adjust configuration deliberately and measure sensitivity. If a slight change in temperature or phrasing materially shifts output, that tells you something about stability.

This is not about chasing perfection. It is about understanding variance.
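One simple way to put a number on that variance is to repeat the same call several times per setting and see how often the runs agree. The sketch below assumes a hypothetical `generate(prompt, document, temperature)` callable that wraps whichever model you use. It is not a real library function. It also treats two outputs as the same only if they match exactly after trimming whitespace, which is a crude stand-in for proper semantic comparison.

```python
from collections import Counter


def agreement_rate(outputs):
    """Share of runs that produced the most common output (1.0 = fully stable)."""
    counts = Counter(outputs)
    return counts.most_common(1)[0][1] / len(outputs)


def sensitivity_sweep(generate, prompt, document, temperatures, runs=5):
    """Map each temperature to the agreement rate across repeated runs.

    `generate` is a hypothetical callable: (prompt, document, temperature) -> str.
    """
    report = {}
    for t in temperatures:
        outputs = [generate(prompt, document, t).strip() for _ in range(runs)]
        report[t] = agreement_rate(outputs)
    return report
```

The same loop works for model versions or prompt phrasings: swap the thing being varied and keep everything else fixed. A setting where agreement falls away sharply is a candidate boundary for the operating envelope.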

Beyond reproducibility, mechanism matters. When something fails, where did it fail?

- Was retrieval incomplete?

- Was chunking misaligned with clause boundaries?

- Did the prompt create implicit incentives for speculation?

- Did the model overweight structural headings and ignore cross references?

Even partial answers allow you to tighten the system: change the retrieval strategy, adjust chunking, constrain the prompt or introduce validation checks.

Without mechanism, you are left with guesswork and patchwork fixes.

Guardrails Should Follow Evidence

Once degradation behaviour is understood, controls become rational rather than reactive.

You might decide that:

- Low-risk summarisation tasks remain suitable for supervised automation

- Financial extraction above a defined document length triggers mandatory human verification

- Case law outputs require enforced citation validation

- Model version identifiers are embedded in output artefacts for audit traceability

These are not admissions of weakness. They are signs of understanding and maturity.

Doxycycline continues to be prescribed because clinicians understand the conditions under which adverse reactions are more likely. They can explain the trade-offs clearly, and patients can then adjust their behaviour accordingly.
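Rules like those in the list above can be written down as plain code, so the controls are explicit and testable rather than tribal knowledge. This is only a sketch: the task kinds, the control names and the 80-page threshold are all made up for illustration, and in practice the threshold should come from the measured degradation behaviour.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical threshold. Set it from where measured accuracy actually drops.
MAX_UNVERIFIED_PAGES = 80


@dataclass
class Task:
    """A unit of work to route. Field names and values are illustrative."""
    kind: str         # "summarisation", "financial_extraction" or "case_law"
    page_count: int
    risk: str = "low"


def required_controls(task: Task, model_version: str) -> List[str]:
    """Return the controls that apply to a task before output is released."""
    # Every output carries the model version for audit traceability
    controls = [f"stamp_model_version:{model_version}"]
    if task.kind == "financial_extraction" and task.page_count > MAX_UNVERIFIED_PAGES:
        controls.append("mandatory_human_verification")
    if task.kind == "case_law":
        controls.append("citation_validation")
    if task.kind == "summarisation" and task.risk == "low":
        controls.append("supervised_automation")
    return controls
```

For example, `required_controls(Task("financial_extraction", 120), "v1")` returns the version stamp plus `mandatory_human_verification`, while the same task at 40 pages returns only the version stamp.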

Trust is built on defined boundaries.

Legal AI does not need to be flawless to be valuable. It needs to be predictable within defined operating conditions.

Benchmarks tell you how a system performs in a controlled setting. Degradation curves tell you how it behaves under stress. In regulated environments, the latter matters more.

If you cannot describe where performance bends, where it drops and what controls activate when it does, you are not governing the system. You are deploying it optimistically.

Understanding where the sunlight hits is not a flaw in the technology. It is the difference between experimentation and engineering.

If we are serious about scaling AI in legal practice, engineering discipline has to win.

Predictability is the real objective here.